Recently Vinod Kurpad delivered a presentation on using AI to build document processing workflows. The presentation slides can be found at https://on24static.akamaized.net/event/34/60/86/0/rt/1/documents/resourceList1636579433122/useaitobuilddocumentprocessingworkflows1636579401517.pdf

By using AI, several boxed fields (e.g., an SSN) on a form can be treated as one field. AI also handles dynamic entities such as tables, recognizing multiple rows in a single table with various column types.

Listed below are questions and answers discussed during the presentation:

- Could Form Recognizer be used in Power Apps for receipt processing, instead of the AI Builder SKU that comes with Power Apps at an additional licensing cost?

Form Recognizer is available in Power Apps, which includes a receipt processing app. The licensing model in this scenario is via Power Apps. https://powerapps.microsoft.com/en-us/blog/process-receipts-with-ai-builder/

- Is it possible to detect if a line of text on a document is a 'header' of the document section? For example, a form where customer data is entered might have a header above it that says "Customer Information".

Not yet. Layout currently supports text, checkboxes, selection marks, and reading order. Paragraphs, headers, and more are on the roadmap.

- Can the output from the model be XML?

Currently the only supported format is JSON, but a number of JSON-to-XML converters should enable you to convert the output to XML.
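As a rough illustration of such a conversion, the sketch below turns a JSON document into XML using only the Python standard library. The `to_xml` helper and the sample payload are hypothetical and heavily simplified; a real Form Recognizer response has many more fields, and any key that is not a valid XML tag name would need to be sanitized first.

```python
import json
import xml.etree.ElementTree as ET


def to_xml(tag, value):
    """Recursively convert a JSON-compatible value into an XML element."""
    element = ET.Element(tag)
    if isinstance(value, dict):
        # Each key becomes a child element (assumes keys are valid XML names).
        for key, child in value.items():
            element.append(to_xml(key, child))
    elif isinstance(value, list):
        # List entries have no name in JSON, so wrap each one in <item>.
        for item in value:
            element.append(to_xml("item", item))
    elif value is not None:
        element.text = str(value)
    return element


# Hypothetical sample, not an actual service response.
sample_json = '{"fields": {"MerchantName": "Contoso", "Total": 14.5}, "pages": [1, 2]}'
root = to_xml("analyzeResult", json.loads(sample_json))
xml_text = ET.tostring(root, encoding="unicode")
print(xml_text)
```

Wrapping list entries in a generic `<item>` element is only one possible mapping; pick whatever convention your downstream XML consumer expects.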

- Is there any plan to support "reading order" when there are multiple columns of text (or an inset section within a larger body of text)?

Reading order already supports multiple columns. Try it out :)

- For the receipts, would you be able to extract the information in such a way, that you could later write a query to calculate total amount for a given date?

Form Recognizer receipts returns all line items, date, total, etc. You can write validations and additional logic on top of the output as post-processing. See here for all fields extracted from receipts: https://docs.microsoft.com/en-us/azure/applied-ai-services/form-recognizer/concept-receipt
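A minimal sketch of that kind of post-processing is shown below. It assumes you have already copied the date and total from each receipt result into plain Python dictionaries; the `receipts` records, their key names, and the `totals_by_date` helper are illustrative, not part of the service output.

```python
from collections import defaultdict
from datetime import date

# Hypothetical records, each built from one analyzed receipt.
receipts = [
    {"merchant": "Contoso", "transaction_date": date(2021, 11, 1), "total": 12.5},
    {"merchant": "Fabrikam", "transaction_date": date(2021, 11, 1), "total": 7.5},
    {"merchant": "Contoso", "transaction_date": date(2021, 11, 2), "total": 3.25},
    {"merchant": "Unknown", "transaction_date": date(2021, 11, 2), "total": None},
]


def totals_by_date(records):
    """Sum receipt totals per transaction date, skipping incomplete records."""
    totals = defaultdict(float)
    for record in records:
        # A field may be missing when it could not be extracted.
        if record.get("transaction_date") is None or record.get("total") is None:
            continue
        totals[record["transaction_date"]] += record["total"]
    return dict(totals)


daily_totals = totals_by_date(receipts)
print(daily_totals[date(2021, 11, 1)])
```

In practice you would likely store the extracted fields in a database and run the equivalent aggregate query there; the grouping logic is the same.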

- Is there any plan to recognize paragraphs?

It's on the roadmap for a preview in the first half of next year.

- If you are interested in seeing Azure Form Recognizer applied to Financial Services, a great example is linked below:

https://customers.microsoft.com/en-us/story/financial-fabric-banking-capital-markets-azure

- What is the lowest DPI that this can read?

When using Form Recognizer, no pre-processing of the images is needed. It supports low DPI and various image qualities.

- Can a key-value pair be a field text label and a field value?

Yes. A key-value pair can also be a value with no explicitly labeled key, where a span of text describes the value instead.

- Can it recognize handwritten forms?

Yes, Form Recognizer supports handwritten text in the following languages: English, Simplified Chinese, French, German, Italian, Portuguese, and Spanish. See all supported languages, printed and handwritten, here: https://docs.microsoft.com/en-us/azure/applied-ai-services/form-recognizer/language-support

- Which languages does it currently support?

See the full list of supported languages here: https://docs.microsoft.com/en-us/azure/applied-ai-services/form-recognizer/language-support

- What are the supported file formats?

Form Recognizer supports the following file formats: PDF (digital, scanned, and multi-page), TIFF, BMP, JPG, and PNG.

- Does the solution skew, rotate and adjust poorly scanned forms?

Yes it does. No pre-processing needed. Please send the source document to Form Recognizer as is and Form Recognizer will do all the work for you.

- Are you considering combining this with voice recognition to enable the creation of printed transcripts from recorded interviews?

Cognitive Services also includes speech to text and transcription APIs that you can combine for interviews.

- This is my first time with Form Recognizer, and I am getting this error while configuring a resource in Form Recognizer Studio: Operation returned an invalid status code 'Conflict'

Try creating a resource with a different name and see if that helps; the Studio is continually improving after the recent preview. You can also send an email to formrecog_contact@microsoft.com.

- Can Form Recognizer handle PDFs with FDFs included? For example, a fill-in form that includes signatures (which are not fill-in).

Form Recognizer includes a signature detection capability: you can train a model to detect whether a signature is present or not.

- Do documents need to be OCR-ed? I am guessing it can't read PDFs with text in them that is embedded as images?

The Form Recognizer features (APIs) build on top of OCR and handle digital, image, and hybrid documents transparently.

- For knowledge-mining of documents, in the example, it showed some results from a search with a synopsis of what I perceive are different documents. How does one then get to the specific document from the text provided (e.g., hyperlinked)?

The demo showed the specific document rendered in the browser. You can add a URL field to the index that points to the location of the original document.

- Is it possible to label a table that spans across multiple pages in the custom model?

Not yet. It is on our roadmap but not available yet. Currently, in such a case you should split the document into pages, send the individual pages to Form Recognizer, and then unite the results in post-processing. For invoices and line items, tables that span multiple pages are supported.
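One way to unite the per-page results in post-processing is sketched below. It assumes each page's table has already been converted into a list of rows (each row a list of cell strings) and that a repeated header row may appear at the top of continuation pages; the `merge_page_tables` helper and the sample data are hypothetical.

```python
def merge_page_tables(page_tables):
    """Concatenate per-page tables into one, dropping repeated header rows."""
    merged = []
    header = None
    for rows in page_tables:
        if not rows:
            continue  # page without table content
        if header is None:
            # The first non-empty page defines the header row.
            header = rows[0]
            merged.append(header)
            merged.extend(rows[1:])
        elif rows[0] == header:
            # Continuation page that repeats the header: skip the repeat.
            merged.extend(rows[1:])
        else:
            # Continuation page without a header: keep every row.
            merged.extend(rows)
    return merged


# Hypothetical tables extracted from three single-page submissions.
page_1 = [["Item", "Qty"], ["Widget", "2"]]
page_2 = [["Item", "Qty"], ["Gadget", "1"]]
page_3 = [["Gizmo", "5"]]
combined = merge_page_tables([page_1, page_2, page_3])
print(combined)
```

Comparing header rows by exact equality is a simplification; real scans may need normalization (trimming, case folding) before the comparison.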

- Is it possible to open a FOTT project in Form Recognizer Studio?

Yes, Form Recognizer enables opening a FOTT project. Form Recognizer also supports backward compatibility, so you can use 2.1 release models in the new release.

- Can you talk about what I would do if I get different form types and don't know which one I'm receiving. (I'm thinking of data from a doctor's office, and they send all sorts of different forms)

If you know the different form types you expect to receive, you should be able to train a model for each form and compose all the individual models into a single logical model. Sending a document to this logical model will result in the document being classified to determine the right model to extract the fields. The selected model is also returned as part of the response.
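Because the selected model is returned with the response, downstream code can branch on it. The sketch below shows one way to route results to per-form handlers. The result shape (`doc_type` and `fields` keys), the form type names, and the handlers are all hypothetical stand-ins; check the actual response schema of the API version you use.

```python
def route_result(result, handlers):
    """Dispatch an analysis result to the handler registered for its form type."""
    doc_type = result.get("doc_type")
    handler = handlers.get(doc_type)
    if handler is None:
        raise ValueError(f"no handler registered for document type {doc_type!r}")
    return handler(result.get("fields", {}))


# Hypothetical handlers, one per form type in the composed model.
handlers = {
    "intake_form": lambda fields: ("patients", fields.get("PatientName")),
    "lab_order": lambda fields: ("labs", fields.get("OrderId")),
}

# Hypothetical, simplified result for a document classified as a lab order.
sample_result = {"doc_type": "lab_order", "fields": {"OrderId": "A-1001"}}
routed = route_result(sample_result, handlers)
print(routed)
```

Raising on an unknown type makes unexpected forms visible early instead of silently dropping them.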

- Do all the documents need to be on Azure for Azure knowledge-mining to work, or can they be left on the existing Microsoft server and be auto-identified/updated?

No. Data can be anywhere, and you can stream the document to Form Recognizer for analysis or send a URL. Only the training data for a custom model needs to be in a blob, and only 5 documents are needed to get started training your own custom model.

- Is there a max file size?

Max file size is 50MB currently.
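If you want to catch oversized or unsupported files before submitting them, a small client-side check along these lines may help. It is a sketch under stated assumptions: the 50 MB limit is taken as 50 × 1024 × 1024 bytes, the extension list mirrors the file formats named earlier in this Q&A (with `.tif` and `.jpeg` added as assumed aliases), and `check_document` is a hypothetical helper, not part of any SDK.

```python
from pathlib import Path

# Assumption: 1 MB is treated as 1024 * 1024 bytes.
MAX_BYTES = 50 * 1024 * 1024
# Assumption: .tif and .jpeg are accepted as aliases of TIFF and JPG.
SUPPORTED_SUFFIXES = {".pdf", ".tif", ".tiff", ".bmp", ".jpg", ".jpeg", ".png"}


def check_document(name, size_bytes):
    """Return a list of problems found; an empty list means the file looks OK."""
    problems = []
    suffix = Path(name).suffix.lower()
    if suffix not in SUPPORTED_SUFFIXES:
        problems.append(f"unsupported file type: {suffix or '(none)'}")
    if size_bytes > MAX_BYTES:
        problems.append(f"file is {size_bytes} bytes, limit is {MAX_BYTES}")
    return problems


print(check_document("scan.PDF", 1024))
print(check_document("notes.docx", MAX_BYTES + 1))
```

For files on disk, `Path(path).stat().st_size` gives the size to pass in.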

- How would you train 2nd & 3rd pages of documents?

The Studio and the underlying files handle multi-page labels and navigating across pages to label the data you want to extract.

- Can you train the model with only 1 sample of a variation?

To train a model, all documents within a project and a model need to be of the same type and format. You can then compose several models into a composed model, so you do not need to classify the document before sending it to Form Recognizer. You need 5 forms of the same type to train a model.

- Wow, the form has handwritten text. Will it be challenging to train the model, since handwriting can differ greatly between individuals?

Form Recognizer text extraction supports print and handwritten text, and we just expanded handwritten support from English to Simplified Chinese, French, Italian, Spanish, German, and Portuguese.

- Can it handle files with hundreds of multipage documents?

Yes, Form Recognizer supports multipage documents.

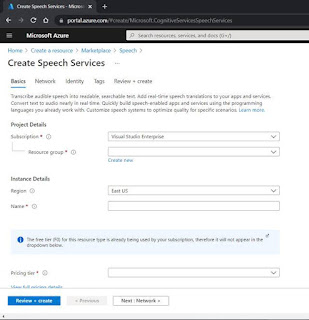

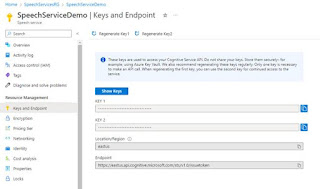

- Is there a Resource provider that is necessary to be registered in Azure?

Form Recognizer is the resource you need to create in the portal.

- What is a FOTT project?

FOTT is the previous version of the labeling tool, now superseded by the new Form Recognizer Studio: https://fott-2-1.azurewebsites.net/

- What if tables sometimes have 4 or 5 columns, and the headings of those tables are the same but sometimes appear as A, B, C, D and sometimes as A, D, C, B?

Layout extracts tables as they appear in the document and tries to extract all rows and columns, including indicating merged cells through row and column spans. When labeling tables, if the table changes and has a varying number of rows or columns, you can label it as a dynamic table.

- Can Form Recognizer handle handwriting?

Yes, Form Recognizer supports handwritten text in the following languages: English, Simplified Chinese, French, German, Italian, Portuguese, and Spanish. See all supported languages, printed and handwritten, here: https://docs.microsoft.com/en-us/azure/applied-ai-services/form-recognizer/language-support

- How do you open an existing FOTT project in Form Recognizer Studio?

Connect to the same blob storage and the project will open with all the data and labeling completed.

- Is it safe to assume that this will be added to the Power Automate stack?

AI Builder, which is a part of the Power Automate stack, uses Form Recognizer, and you should be able to use the same set of capabilities in AI Builder as well.

- If yes, how accurately can Form Recognizer translate handwriting into text? Did you benchmark it against medical notes or similar types of handwriting?

We suggest benchmarking it on your own content. That said, we believe, and customers have validated, that the handwritten OCR is world class for the supported languages. And yes, Form Recognizer and OCR technology are heavily used in healthcare and financial verticals that involve processing handwritten text.

- If there are variations within a document over some years, how does Form Recognizer handle such variations?

When training a custom model, if the variations are slight (a key name changed, a field moved, etc.), then Form Recognizer should still be able to extract the data. If you see that it misses fields, you can add a few documents with the variation to the training set to improve the model. If the variation is large, you should train a model per year and then compose them into a composed model.

- Are there any additional examples, documentation, videos, or a sandbox available?

You can start with the documentation: https://docs.microsoft.com/en-us/azure/applied-ai-services/form-recognizer/ . Also note that Form Recognizer supports a free tier, which should allow you to test all the different features without paying for it.

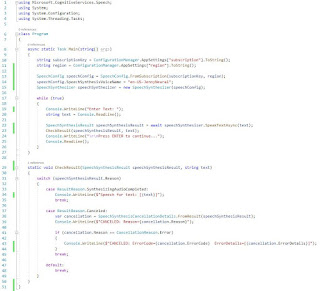

- Can I create custom code to integrate custom functionality in the workflow using C#?

Yes! Form Recognizer provides SDKs for a few different languages, and the REST API is available for languages not supported by an SDK.

- How well does it generalize tables that don't have a strict row column format. For example, maybe some table cells are split cells that contain multiple fields?

Form Recognizer supports split cells, merged cells, tables with no borders, and complex tables. See more details on table extraction in the following blog: https://techcommunity.microsoft.com/t5/azure-ai/enhanced-table-extraction-from-documents-with-form-recognizer/ba-p/2058011

- Can form recognizer identify tables when the tables are not delimited, but the columns are easily seen and organized?

If you mean concatenated or complex tables, then yes, we are continuously improving on extracting those. In addition, table extraction supports tables with or without physical lines.

- Is there any way to use this with Power Automate with clients that are on another 365 domain?

Form Recognizer is integrated within Power Apps; see the Form Processing apps. Regarding another 365 domain, please reach out via Form Recognizer Contact Us and we can try to assist.